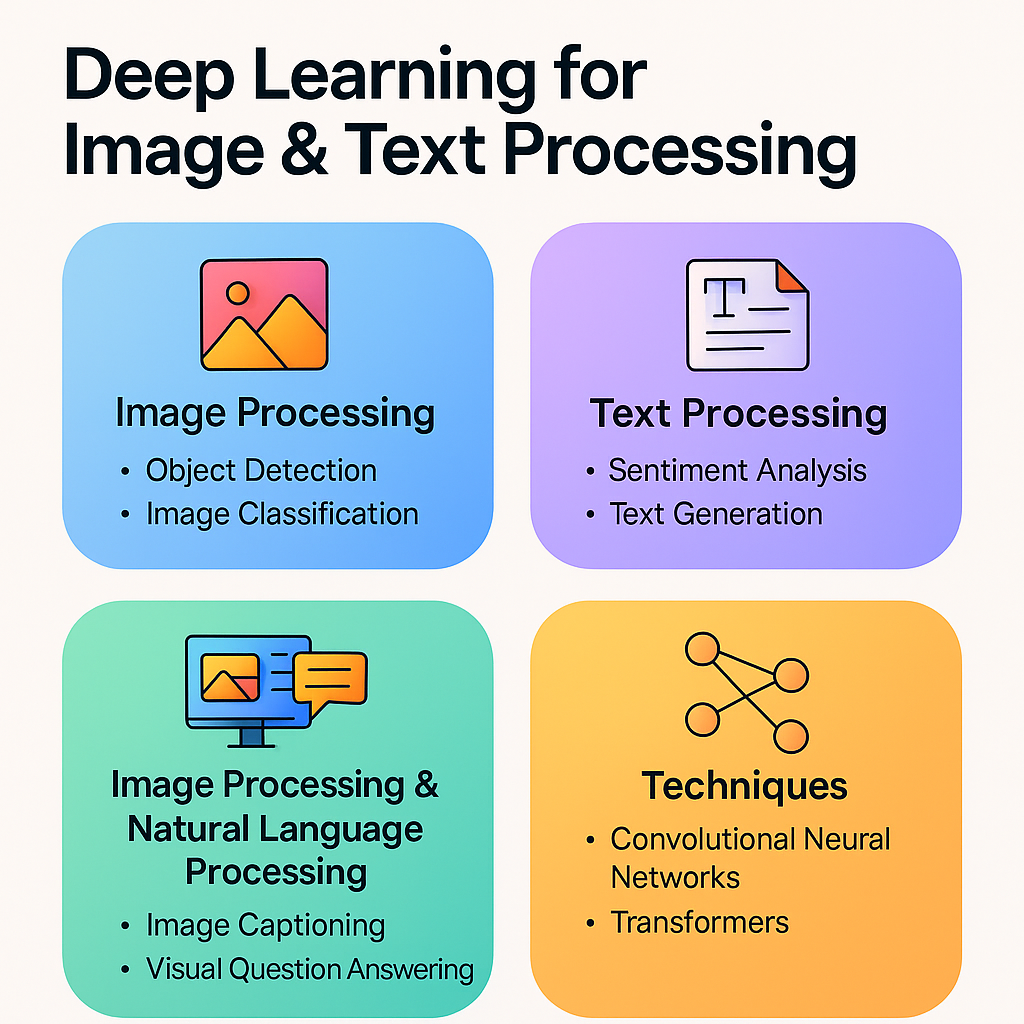

Deep learning for image and text processing has changed how machines interpret, understand, and generate information. The integration of computer vision and natural language processing (NLP) into everyday applications has reshaped industries such as healthcare, automotive, marketing, security, finance, entertainment, and education. Image processing enables machines to identify objects, detect patterns, analyze scenes, and generate new visual content. Text processing allows models to understand human language, translate between languages, generate context-aware responses, analyze sentiment, and summarize large documents. Together, image and text processing form the foundation of AI systems that perceive, reason, and interact with people. With deep learning, raw pixel data and unstructured sentences are transformed into features and patterns that drive automation, prediction, and decision-making at scale.

Convolutional Neural Networks (CNNs) have enabled major advances in image recognition. A CNN slides small learned filters over an image, and successive layers extract hierarchical features: early layers respond to edges and textures, deeper layers to shapes and whole-object patterns. This lets models classify objects, detect anomalies, and segment scenes accurately. From medical imaging that identifies tumors to autonomous vehicles detecting pedestrians and traffic signs, CNN-based models have become the backbone of modern computer vision. Transfer learning with pre-trained networks like ResNet, VGG, and EfficientNet has further accelerated adoption, enabling developers to fine-tune high-performance models with limited data. On specific, well-defined benchmarks such as large-scale image classification, deep learning models now match or exceed human accuracy, and they can flag subtle anomalies in X-rays, CT scans, and MRI images. The rise of Generative Adversarial Networks (GANs) has brought image synthesis, style transfer, and realistic visual generation to new levels, expanding what machines can create visually.
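To make the idea of a filter extracting an edge feature concrete, here is a minimal sketch in plain Python. It is illustrative only: the toy image, the hand-written Sobel kernel, and the function name `conv2d` are assumptions for this example, whereas a real CNN learns its kernel values during training and runs on an optimized library.

```python
def conv2d(image, kernel):
    """'Valid' (no padding) 2D cross-correlation, the operation CNN layers apply."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the kernel with the image patch under it and sum.
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# Toy 5x6 grayscale image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]

# Hand-written Sobel kernel that responds to vertical edges (assumption:
# a trained CNN would learn filter values like these on its own).
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Apply the filter, then ReLU (clip negatives to zero), as a CNN layer would.
feature_map = [[max(0, v) for v in row] for row in conv2d(image, SOBEL_X)]

for row in feature_map:
    print(row)  # each row is [0, 4, 4, 0]
```

The nonzero values sit exactly where the image changes from dark to bright, which is the sense in which early layers "detect edges".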

In natural language processing, deep learning architectures such as Recurrent Neural Networks (RNNs), including Long Short-Term Memory (LSTM) networks, and Transformer models have transformed how machines interpret human language. Traditional rule-based NLP struggled with context, ambiguity, and linguistic complexity. Deep learning, especially through Transformers such as BERT, GPT, and T5, has enabled machines to capture nuance, tone, semantics, and long-range dependencies in text. These models power everyday interactions: chatbots, virtual assistants, sentiment analysis tools, translation systems, automated customer service, and intelligent writing assistants. With massive datasets and self-supervised training, language models can now generate human-like text, summarize lengthy documents, and answer complex questions based on context. The shift from sequential models, which read one token at a time, to attention-based Transformers, which relate every token to every other token in parallel, has improved accuracy, training speed, and contextual understanding in NLP systems.
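The attention mechanism behind Transformers can be sketched in a few lines. The following is a simplified, single-head version of scaled dot-product attention in plain Python. The toy token vectors and function names are assumptions for illustration, and real models add learned projection matrices, multiple heads, and masking.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(q . k / sqrt(d_k)) applied to values."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        dim = len(values[0])
        outputs.append(
            [sum(w_j * v[c] for w_j, v in zip(w, values)) for c in range(dim)]
        )
    return outputs, weights


# Three toy 2-dimensional token vectors (hypothetical values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Self-attention: every token attends to every token, regardless of distance.
outputs, weights = attention(tokens, tokens, tokens)

for w in weights:
    print([round(x, 3) for x in w])
```

Because each token's weights cover the whole sequence at once, a dependency between distant words costs no more to model than one between neighbors, which is the property the paragraph above refers to.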

Combining image and text processing unlocks advanced multimodal AI capabilities. Models such as CLIP, BLIP, and Vision Transformers (ViT) paired with language models allow machines to interpret visual information in natural language. This fusion enables tasks such as image captioning, visual question answering (VQA), content moderation, scene understanding, and multimodal search. For instance, an AI model can look at an image and generate a textual description like “A child riding a bicycle in a park,” or answer questions such as “How many dogs are in the picture?” These models learn complex relationships between words and visual features, allowing them to interpret images not just as pixel grids but as meaningful scenes. Multimodal AI is also used in document understanding, where systems read images of forms, extract text, classify fields, and convert unstructured documents into usable digital data.
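One common way to relate images and text, used by CLIP-style models, is to embed both into a shared vector space and compare them by cosine similarity. The sketch below shows only that comparison step. The three-dimensional vectors are made-up placeholders, not output from any real encoder, and `best_caption` is a hypothetical helper name.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_vec, captions):
    """Return the caption whose embedding is closest to the image embedding."""
    return max(captions, key=lambda text: cosine_similarity(image_vec, captions[text]))


# Placeholder embeddings (assumption: in practice these come from trained
# image and text encoders that share an embedding space).
image_embedding = [0.9, 0.1, 0.3]
caption_embeddings = {
    "A child riding a bicycle in a park": [0.8, 0.2, 0.4],
    "A bowl of soup on a table": [0.1, 0.9, 0.2],
    "A city skyline at night": [0.2, 0.3, 0.9],
}

match = best_caption(image_embedding, caption_embeddings)
print(match)  # A child riding a bicycle in a park
```

The same ranking step underlies multimodal search: embed the query once, then sort candidate images or captions by similarity.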

Deep learning in image and text processing is heavily driven by large datasets, GPU computing, and self-supervised learning. Datasets like ImageNet, COCO, and OpenImages have enabled breakthroughs in computer vision, while text corpora like Wikipedia, Common Crawl, and BooksCorpus power NLP advancements. Self-supervised learning reduces the need for labeled data, allowing models to learn from unlabeled images, videos, and raw text. For example, contrastive learning teaches a model to give similar representations to different augmented views of the same image while pushing apart the representations of different images. In NLP, masked language modeling hides some words in a sentence and trains the model to predict them from the surrounding context. This shift has made AI more accessible, allowing smaller teams to build high-quality deep learning applications without massive manual labeling efforts.
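The data preparation step for masked language modeling can be sketched as follows. This is a simplified illustration: the function name `mask_tokens`, the whitespace tokenization, and the fixed seed are assumptions, and BERT's published recipe additionally replaces some selected tokens with random words or leaves them unchanged rather than always inserting `[MASK]`.

```python
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens; the hidden originals become the labels.

    Returns (masked_tokens, labels), where labels[i] is the original token
    at masked positions and None elsewhere (nothing to predict there).
    """
    rng = random.Random(seed)  # seeded so the example is reproducible
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK_TOKEN)
            labels.append(tok)  # the model is trained to recover this word
        else:
            masked.append(tok)
            labels.append(None)
    return masked, labels


sentence = "deep learning models learn useful features from raw unlabeled text".split()
masked, labels = mask_tokens(sentence, mask_prob=0.3)

print(" ".join(masked))
print(labels)
```

No human annotation is involved: the labels are carved out of the text itself, which is why this kind of training scales to very large unlabeled corpora.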

Industries across the world rely on deep learning for automation and decision-making. In healthcare, image models detect diseases in radiology scans, analyze pathology slides, and assist in early diagnosis. In retail and e-commerce, NLP models analyze customer sentiment, recommend products, and automate support. Security systems use facial recognition and OCR technologies for authentication and monitoring. Financial institutions utilize deep learning for fraud detection, risk analysis, and document verification. Autonomous vehicles use computer vision to perceive their environment, while NLP models help process voice commands. Entertainment platforms generate personalized content recommendations and caption videos using multimodal models. Deep learning has become the invisible force powering intelligent, seamless, and personalized digital experiences.

Despite its immense potential, deep learning comes with challenges related to bias, interpretability, computational cost, and data privacy. Vision models can misinterpret images due to biased training data, while language models may generate incorrect or harmful content if not properly controlled. High-performance deep learning requires powerful GPUs, which increases infrastructure costs. Processing sensitive medical or financial data brings privacy concerns that require encryption, secure data pipelines, and regulatory compliance. Explainability remains a key challenge—while deep models achieve high accuracy, understanding why they make decisions is often difficult. Organizations now focus on explainable AI (XAI), privacy-preserving ML, federated learning, and ethical AI standards to address these concerns responsibly.

The future of deep learning for image and text processing will be defined by multimodal intelligence, edge AI, and foundation models. With models capable of processing text, images, audio, and video simultaneously, AI will become more human-like in perception and reasoning. Edge AI will bring real-time image and language processing to smartphones, IoT devices, and autonomous machines without relying heavily on cloud servers. Foundation models—trained on massive datasets—will serve as universal engines powering countless specialized applications. Advances in low-code AI, self-supervised learning, and automated model optimization will make deep learning more accessible to businesses of all sizes. As deep learning evolves, the synergy between image and text processing will create intelligent systems capable of transforming industries and enhancing every aspect of digital life.

Ultimately, deep learning for image and text processing represents the next major wave of artificial intelligence innovation. It bridges perception and language, enabling machines to understand the world visually and linguistically. This dual capability opens the door to smarter cities, safer transportation, automated workflows, efficient healthcare, personalized user experiences, and more intuitive human–AI interactions. As technology continues to evolve, deep learning will expand its influence, shaping a future where intelligent systems collaborate with humans seamlessly and responsibly. Organizations prepared to adopt these technologies today will lead innovation, build better products, and unlock the full potential of AI in an increasingly connected world.
