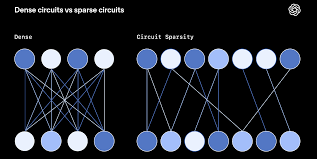

Sparse neural networks aim to reduce the number of active parameters while preserving model performance, and in some cases improving it. In a dense model, every unit in a layer is connected to every unit in the next layer; a sparse network keeps only the connections that matter most. This design can make AI systems more efficient and more practical to scale, especially as model sizes continue to grow.

In sparse neural networks, unnecessary or low-impact weights are removed, leaving behind a compact structure focused on the most relevant features. By eliminating redundant connections, the model concentrates its capacity on meaningful patterns rather than distributing effort across all parameters. This selective connectivity enables strong performance with fewer resources.

One of the major benefits of sparsity is a reduction in memory usage and computational cost. With fewer nonzero parameters, a model can be stored more compactly, and when the software and hardware are able to skip the zeros, inference runs faster and training overhead drops. These efficiency gains make sparse models particularly attractive for large-scale deployments and real-time applications.
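As a rough illustration of the memory argument, the sketch below compares the storage needed for a dense weight matrix with the storage needed for the same matrix in compressed sparse row (CSR) form. The function names, the 4-byte value and index sizes, and the 1024 x 1024 layer are assumptions chosen for the example, not figures from any particular model.

```python
def dense_bytes(rows, cols, value_bytes=4):
    """Bytes needed to store every weight of a rows x cols matrix."""
    return rows * cols * value_bytes


def csr_bytes(rows, nonzeros, value_bytes=4, index_bytes=4):
    """Bytes needed for a CSR layout: one value and one column index per
    nonzero weight, plus one row pointer per row and a final end pointer."""
    return nonzeros * (value_bytes + index_bytes) + (rows + 1) * index_bytes


# Hypothetical 1024 x 1024 layer stored as 32-bit floats.
dense = dense_bytes(1024, 1024)

# About 90% sparsity: roughly 10% of the 1,048,576 weights remain.
sparse_90 = csr_bytes(1024, 104_858)

# 50% sparsity: half of the weights remain.
sparse_50 = csr_bytes(1024, 524_288)

print(dense, sparse_90, sparse_50)
```

Under these assumptions the dense layer takes 4,194,304 bytes and the 90% sparse layer takes 842,964 bytes, while the 50% sparse layer is slightly larger than the dense one because every stored weight also carries an index. The savings therefore depend on both the sparsity level and the storage format.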

Sparse neural networks are especially well-suited for edge devices and resource-constrained environments. Mobile devices, IoT systems, and embedded hardware benefit from reduced memory footprints and lower power consumption. This enables advanced AI capabilities to run locally without relying heavily on cloud infrastructure.

Several techniques enable sparsity in neural networks. Pruning methods remove less important weights after or during training, while sparse initialization starts training with a limited number of connections. Other approaches dynamically adjust sparsity levels as training progresses, balancing efficiency and accuracy.
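The pruning and gradual-sparsity ideas above can be sketched in a few lines. This is a minimal, framework-free illustration: `magnitude_prune` treats the smallest absolute weights as the least important (one common criterion, assumed here), and `sparsity_at_step` is a simplified cubic ramp that starts from zero sparsity. Both function names and the example values are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` (a list of rows) in which the fraction
    `sparsity` of entries with the smallest magnitudes is set to zero."""
    ranked = sorted(
        (abs(w), i, j)
        for i, row in enumerate(weights)
        for j, w in enumerate(row)
    )
    to_remove = int(len(ranked) * sparsity)  # number of weights to zero out
    pruned = [list(row) for row in weights]
    for _, i, j in ranked[:to_remove]:
        pruned[i][j] = 0.0
    return pruned


def sparsity_at_step(step, total_steps, final_sparsity):
    """Target sparsity at a training step: rises quickly at first, then
    levels off at `final_sparsity` (a simplified cubic schedule)."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return final_sparsity * (1.0 - (1.0 - progress) ** 3)


layer = [[0.9, -0.05, 0.3],
         [0.01, -0.7, 0.2]]

# Remove the three smallest-magnitude weights out of six.
half_sparse = magnitude_prune(layer, 0.5)
print(half_sparse)  # [[0.9, 0.0, 0.3], [0.0, -0.7, 0.0]]

# Prune a little more at each checkpoint of a hypothetical 100-step run.
for step in (0, 50, 100):
    target = sparsity_at_step(step, 100, 0.9)
    print(step, magnitude_prune(layer, target))
```

In a real training loop the pruned positions would usually be kept as a mask and reapplied after each weight update, so that removed connections stay at zero.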

Beyond efficiency, sparse networks can also be easier to interpret. With fewer connections to inspect, it becomes simpler to trace which inputs and features most influence a prediction. This transparency is valuable in applications where explainability and trust are important.

Sparsity can also help reduce overfitting by eliminating unnecessary complexity. Because a sparse model has fewer free parameters with which to memorize noise, it often generalizes better to unseen data. This can make sparse models particularly effective in scenarios with limited training data or high-dimensional input spaces.

Advances in hardware acceleration further support the adoption of sparse neural networks. Modern GPUs, TPUs, and specialized AI accelerators increasingly offer optimized support for sparse computation, enabling faster execution and better utilization of hardware resources.
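Hardware support usually favors regular sparsity patterns over arbitrary ones. One example is the 2:4 pattern supported by NVIDIA's Ampere-generation GPUs, in which at most two of every four consecutive weights are nonzero. The sketch below shows how such a pattern could be produced for a single row of weights; the function name and the choice to keep the two largest magnitudes per group are assumptions made for illustration.

```python
def prune_2_of_4(row):
    """Zero the two smallest-magnitude weights in every group of four
    consecutive weights, so at most two per group stay nonzero."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = list(row)
    for start in range(0, len(row), 4):
        group = range(start, start + 4)
        # Indices of the two weights with the smallest absolute value.
        smallest = sorted(group, key=lambda idx: abs(row[idx]))[:2]
        for idx in smallest:
            pruned[idx] = 0.0
    return pruned


weights = [0.1, -0.8, 0.3, 0.05, 1.0, 0.2, -0.4, 0.0]
print(prune_2_of_4(weights))
# [0.0, -0.8, 0.3, 0.0, 1.0, 0.0, -0.4, 0.0]
```

Because the position of the zeros is constrained, an accelerator can store only the surviving values plus a small amount of position metadata and skip the rest of the arithmetic.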

Overall, sparse neural networks enable high-performance AI with lower energy consumption and reduced resource requirements. By combining efficiency, interpretability, and scalability, sparsity plays a crucial role in building sustainable and accessible artificial intelligence systems.
