Neural networks are a core concept in artificial intelligence and machine learning. They are loosely inspired by the human brain and designed to help machines learn patterns, make decisions, and interpret complex data. Just as the brain has interconnected neurons, a neural network has artificial neurons, called nodes, that are arranged in layers. These layers work together to process information and learn from data, and their accuracy typically improves with training. Neural networks are now used widely: in speech recognition (as in Siri or Google Assistant), face detection, recommendation systems, self-driving cars, medical diagnosis, and more. They are powerful because they can learn directly from data without needing manually written rules.

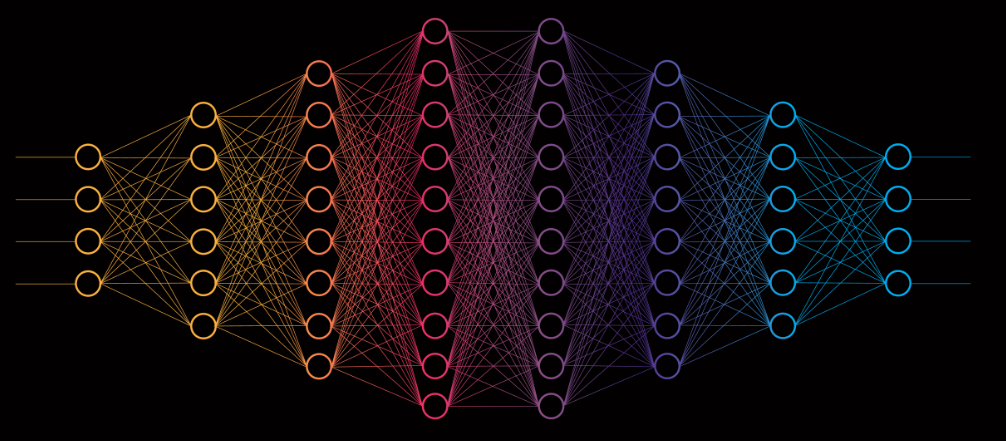

A basic neural network contains three kinds of layers: an input layer, one or more hidden layers, and an output layer. The input layer receives raw data, for example the pixels of an image or text converted into numbers. The hidden layers process this information using weights and activation functions. Weights determine how strongly a particular input influences a node, while activation functions add non-linearity, which lets the network model complex relationships. The output layer provides the final result, such as predicting a class (“cat” or “dog”), generating a score, or answering a question. Neural networks learn through a process called training, where the network adjusts its weights based on errors. A loss function measures how wrong the network’s prediction was, and backpropagation computes how much each weight contributed to that error. An optimizer such as gradient descent then updates the weights to reduce future errors. Over many passes through the training data, called epochs, the network becomes better at recognizing patterns.
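To make the training loop above concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not a production implementation: the function names (`forward`, `train`), the sigmoid activation, the squared-error loss, the hidden-layer size, and the learning rate are all choices made for this example.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, W1, b1, W2, b2):
    # Hidden layer: weighted sum of the inputs, then a non-linear activation.
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(W1, b1)]
    # Output layer: weighted sum of the hidden activations, squashed to (0, 1).
    y = sigmoid(sum(w * hi for w, hi in zip(W2, h)) + b2)
    return h, y

def train(data, hidden=3, epochs=2000, lr=0.5, seed=0):
    # All hyperparameters here are assumptions chosen for the example.
    rng = random.Random(seed)
    n_in = len(data[0][0])
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    W2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    history = []  # mean loss per epoch
    for _ in range(epochs):
        total = 0.0
        for x, target in data:
            h, y = forward(x, W1, b1, W2, b2)
            total += 0.5 * (y - target) ** 2  # squared-error loss
            # Backpropagation: apply the chain rule from the output backwards.
            d_out = (y - target) * y * (1 - y)
            d_hid = [d_out * W2[j] * h[j] * (1 - h[j]) for j in range(hidden)]
            # Gradient descent: move each weight against its gradient.
            for j in range(hidden):
                W2[j] -= lr * d_out * h[j]
                b1[j] -= lr * d_hid[j]
                for i in range(n_in):
                    W1[j][i] -= lr * d_hid[j] * x[i]
            b2 -= lr * d_out
        history.append(total / len(data))
    return (W1, b1, W2, b2), history

# Toy dataset: the logical AND of two inputs.
AND_DATA = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

if __name__ == "__main__":
    params, history = train(AND_DATA)
    print("loss at first epoch:", history[0])
    print("loss at last epoch: ", history[-1])
```

Each entry in `history` is the mean loss for one epoch, so comparing the first and last entries shows whether the weight updates are reducing the error.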

There are many types of neural networks, each designed for specific tasks. Feedforward Neural Networks (FNNs) are the simplest form: information moves in one direction, from input to output. They are used for basic prediction and classification tasks. Convolutional Neural Networks (CNNs) are widely used for image processing because they slide small learned filters across an image to detect patterns like edges, shapes, or textures. This makes them well suited to facial recognition, medical imaging, and object detection. Recurrent Neural Networks (RNNs) handle sequence-based data like text, speech, or time-series information. They carry a hidden state from one step to the next, so earlier inputs can influence later outputs, which helps in language translation or speech recognition. Another advanced type is the Transformer, the architecture used in modern AI models like ChatGPT, Google Bard, and other large language models. Transformers use an attention mechanism to relate words to one another across long text sequences, which makes them extremely powerful for language tasks.
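Two of the ideas above can be sketched in a few lines of plain Python: a convolution filter responding to an edge, and a recurrent hidden state that carries information forward. This is a hedged illustration only. The function names, the hand-written edge filter, and the fixed weights `w_in` and `w_rec` are assumptions for the example; in a real network those values are learned during training.

```python
import math

def conv2d(image, kernel):
    # Slide the kernel over the image with no padding and a stride of 1.
    # Like most deep learning libraries, this does not flip the kernel.
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(len(image) - kh + 1):
        row = []
        for c in range(len(image[0]) - kw + 1):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out

def rnn_final_state(sequence, w_in=0.5, w_rec=0.9):
    # The hidden state h is fed back in at every step, so an early
    # input still influences the state after later inputs arrive.
    h = 0.0
    for x in sequence:
        h = math.tanh(w_in * x + w_rec * h)
    return h

# A 4x6 image: dark on the left (0), bright on the right (1).
IMAGE = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# A hand-written filter that responds to dark-to-bright vertical edges.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

if __name__ == "__main__":
    print(conv2d(IMAGE, VERTICAL_EDGE))  # [[0, 3, 3, 0], [0, 3, 3, 0]]
    print(rnn_final_state([1, 0, 0]))    # non-zero: the first input is remembered
    print(rnn_final_state([0, 0, 0]))    # 0.0: nothing to remember
```

The convolution output is largest where the filter overlaps the boundary between the dark and bright regions and zero over uniform areas, which is what "detecting an edge" means in practice.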

Neural networks are popular because they can achieve high accuracy, adapt to many kinds of problems, and learn from huge amounts of data. However, they often require large datasets, powerful hardware (GPUs/TPUs), and careful tuning. Challenges include overfitting (when the model memorizes the training data instead of learning patterns that generalize), bias in data, and high computational cost. Researchers use techniques like dropout, regularization, batch normalization, and data augmentation to reduce overfitting and stabilize training. Despite these challenges, neural networks continue to evolve rapidly and play a major role in building intelligent systems.
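As a rough illustration of two of the techniques named above, the sketch below shows dropout and an L2 regularization penalty in plain Python. The function names and parameters (`rate`, `lam`) are assumptions for this example, and the dropout shown is the common "inverted" variant, which rescales the surviving activations during training.

```python
import random

def dropout(activations, rate, rng, training=True):
    # During training, zero each activation with probability `rate` and
    # scale the survivors by 1 / (1 - rate) so the expected sum is unchanged.
    # At inference time the activations pass through untouched.
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

def l2_penalty(weights, lam):
    # Added to the loss so that large weights are discouraged.
    return lam * sum(w * w for w in weights)

if __name__ == "__main__":
    rng = random.Random(0)
    hidden = [0.2, 0.8, 0.5, 0.9]
    print(dropout(hidden, rate=0.5, rng=rng))                  # some values zeroed
    print(dropout(hidden, rate=0.5, rng=rng, training=False))  # unchanged
    print(l2_penalty([1.0, -2.0], lam=0.1))
```

Randomly silencing nodes makes it harder for the network to rely on any single one, while the L2 penalty discourages very large weights; both push the model toward patterns that generalize instead of memorized training examples.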
