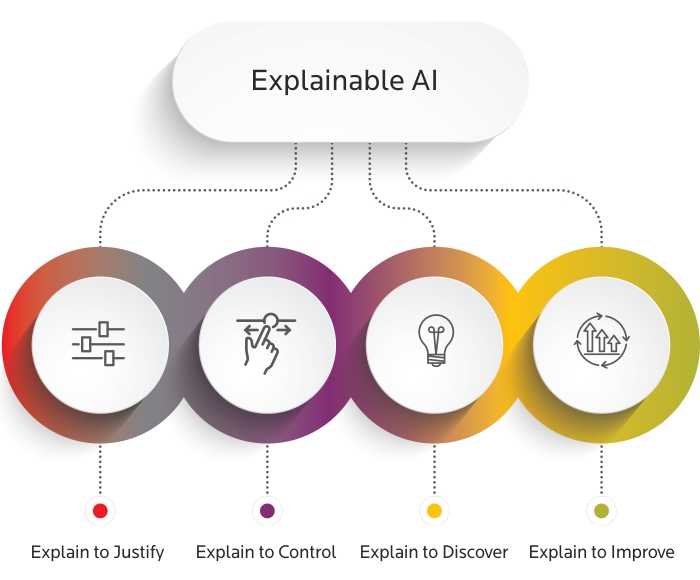

Explainable AI (XAI) and fairness are essential components of responsible AI development. As machine learning models become more complex, especially deep learning models, understanding how they reach their decisions becomes challenging. This course teaches how to uncover the logic behind AI predictions and ensure that models treat all users fairly, without hidden biases or discrimination.

The course begins by exploring why black-box models, such as deep neural networks and large tree ensembles, are difficult to interpret. Students learn about transparency requirements in industries such as finance, insurance, and healthcare, where decisions must be explainable for auditing, regulatory compliance, and user trust. XAI methods help bridge the gap between model accuracy and human understanding.

Learners dive into key explainability tools and techniques, including feature importance, SHAP values, LIME, partial dependence plots, and counterfactual explanations. These methods break ML predictions down into simple, interpretable components that stakeholders can validate and trust. Hands-on labs demonstrate how to apply XAI techniques to real datasets.
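As a minimal illustration of the feature-importance idea, the sketch below implements permutation importance in plain Python: each feature column is shuffled in turn, and the resulting increase in mean squared error indicates how much the model relies on that feature. The `toy_model` function and the synthetic data are hypothetical stand-ins, not course material; in practice, libraries such as scikit-learn (`sklearn.inspection.permutation_importance`) provide tested implementations.

```python
import random
from statistics import mean


def mse(y_true, y_pred):
    """Mean squared error between two equal-length sequences."""
    return mean((t - p) ** 2 for t, p in zip(y_true, y_pred))


def permutation_importance(predict, X, y, n_repeats=5, seed=0):
    """Average increase in MSE when each feature column is randomly shuffled.

    X is a list of rows (lists of floats); predict maps one row to a number.
    """
    rng = random.Random(seed)
    baseline = mse(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild each row with feature j replaced by its shuffled value,
            # breaking the link between that feature and the target.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(y, [predict(row) for row in X_perm]) - baseline)
        importances.append(mean(increases))
    return importances


# Hypothetical toy model: strong dependence on feature 0, weak on feature 1,
# and none at all on feature 2.
def toy_model(row):
    return 3.0 * row[0] + 0.5 * row[1]


data_rng = random.Random(42)
X = [[data_rng.random() for _ in range(3)] for _ in range(200)]
y = [toy_model(row) for row in X]  # synthetic targets for illustration only

imps = permutation_importance(toy_model, X, y)
print([round(v, 4) for v in imps])
```

Because `toy_model` ignores its third input, shuffling that column leaves every prediction unchanged and its importance is exactly zero, while the first feature, which carries the largest weight, scores highest.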

AI fairness is another major focus. Students identify potential sources of bias—such as biased training data, imbalanced representation, or discriminatory historical patterns—and learn how these can create unfair predictions. Fairness metrics like demographic parity, equalized odds, and disparate impact are introduced to quantify inequality and guide improvements.
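The three metrics named above can be computed directly from a model's predictions. The sketch below assumes binary labels and predictions (1 = favorable outcome) and one group attribute per example; the function names and the tiny dataset are hypothetical. Demographic parity is measured as the gap in selection rates, disparate impact as the ratio of the lowest to the highest selection rate (the form used by the common four-fifths rule), and equalized odds as the larger of the true-positive-rate and false-positive-rate gaps.

```python
from collections import defaultdict


def rate(values):
    """Fraction of 1s in a list of 0/1 values (NaN if the list is empty)."""
    return sum(values) / len(values) if values else float("nan")


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true-positive rate, and false-positive rate."""
    buckets = defaultdict(lambda: {"all": [], "pos": [], "neg": []})
    for t, p, g in zip(y_true, y_pred, groups):
        buckets[g]["all"].append(p)
        # Predictions on actual positives feed the TPR; on actual negatives, the FPR.
        buckets[g]["pos" if t == 1 else "neg"].append(p)
    return {
        g: {"selection": rate(b["all"]), "tpr": rate(b["pos"]), "fpr": rate(b["neg"])}
        for g, b in buckets.items()
    }


def fairness_report(y_true, y_pred, groups):
    r = group_rates(y_true, y_pred, groups).values()
    sel = [v["selection"] for v in r]
    tpr = [v["tpr"] for v in r]
    fpr = [v["fpr"] for v in r]
    return {
        # Demographic parity: gap between the highest and lowest selection rates.
        "demographic_parity_diff": max(sel) - min(sel),
        # Disparate impact: lowest / highest selection rate (four-fifths rule flags < 0.8).
        "disparate_impact_ratio": min(sel) / max(sel) if max(sel) > 0 else float("nan"),
        # Equalized odds: the worst gap across TPR and FPR.
        "equalized_odds_diff": max(max(tpr) - min(tpr), max(fpr) - min(fpr)),
    }


# Hypothetical toy predictions for two groups, "a" and "b".
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

report = fairness_report(y_true, y_pred, groups)
print(report)
```

On this toy data, group `a` is selected at a rate of 0.75 and group `b` at 0.25, giving a demographic parity difference of 0.5 and a disparate impact ratio of about 0.33, well under the 0.8 threshold of the four-fifths rule.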

Regulatory frameworks and global ethics standards are discussed, including the GDPR, the EU AI Act, and Responsible AI principles. Organizations must be able to justify automated decisions, especially when those decisions affect loans, hiring, medical access, or legal judgments. This course empowers learners to incorporate compliance and ethical design early in the ML lifecycle.

Fairness-aware model training strategies for reducing discriminatory behavior are also covered. Bias mitigation techniques such as data rebalancing and adversarial fairness methods, along with privacy-preserving ML, help ensure inclusive performance. Students explore fairness dashboards for continuously monitoring model behavior in production environments.
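One widely used data-rebalancing approach is reweighing, which assigns each training example a weight so that group membership and label become statistically independent in the weighted data. The sketch below is a minimal plain-Python version under that assumption; the helper names and the example data are hypothetical, and in a real pipeline the weights would typically be passed to a learner that accepts per-sample weights.

```python
from collections import Counter


def reweighing_weights(labels, groups):
    """Per-example weights w(g, y) = P(g) * P(y) / P(g, y).

    After weighting, group membership and label are independent, so
    under-represented (group, label) pairs receive weights above 1.
    """
    n = len(labels)
    count_g = Counter(groups)
    count_y = Counter(labels)
    count_gy = Counter(zip(groups, labels))
    return [
        (count_g[g] / n) * (count_y[y] / n) / (count_gy[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(labels, groups, weights, group):
    """Weighted share of positive labels within one group."""
    num = sum(w for y, g, w in zip(labels, groups, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


# Hypothetical training labels: group "a" is 75% positive, group "b" only 25%.
labels = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

weights = reweighing_weights(labels, groups)
for grp in ("a", "b"):
    print(grp, round(weighted_positive_rate(labels, groups, weights, grp), 3))
```

In this example, positive labels make up 75% of group `a` but only 25% of group `b`; after reweighing, the weighted positive rate is 0.5 in both groups, so a model trained on the weighted data no longer sees group membership as a proxy for the label.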

Explainability enables better collaboration between data scientists, business teams, and end users. Clear insights allow companies to refine products, align with societal values, and reduce legal risk. The course highlights real-world cases where a lack of explainability eroded public trust, underscoring the need for transparency in critical systems.

Emerging trends such as interpretable-by-design models and multi-modal explanation tools are introduced. Learners will examine how generative AI systems bring new explainability challenges and research directions, including natural language explanations that simplify complex reasoning.

By completing this course, learners will gain the ability to build AI systems that are fair, interpretable, and accountable. They will be skilled at detecting bias, explaining model predictions, and designing trustworthy AI solutions suited for both ethical and enterprise needs.
