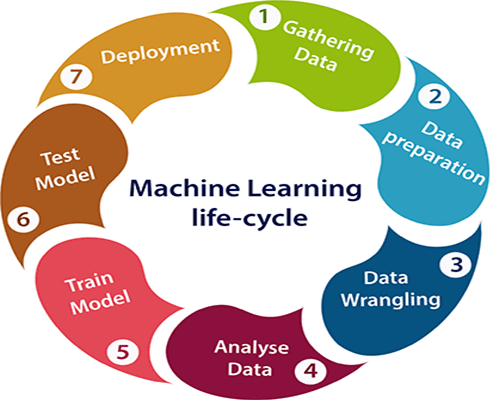

The lifecycle of a machine learning model describes the complete journey it undergoes from conception to retirement. Unlike traditional software, ML models are dynamic systems: their behavior depends heavily on data quality, it shifts as the environment they operate in changes, and it has to be monitored continuously. Understanding the lifecycle helps keep models accurate, reliable, and aligned with business goals throughout their operational lifetime.

The process begins with problem definition, where teams clarify objectives, success metrics, and the feasibility of using machine learning to address the challenge. This early step establishes the direction for data collection, model selection, and evaluation strategies. Without a well-defined scope, ML projects often struggle with unclear expectations and inconsistent outcomes.

Data collection and preparation form the next stage. Raw data is gathered from various sources, cleaned, labeled, transformed, and engineered into meaningful features. High-quality data is the foundation of high-performance models. This stage often takes the most time because inconsistencies, missing values, and biases must be addressed before training begins.
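As a rough illustration of the cleaning step described above, the sketch below removes exact duplicate rows and fills missing numeric values with the median of the observed ones. The record layout, the field names, and the choice of median imputation are assumptions made for the example, not a recommendation for any particular dataset.

```python
from statistics import median


def clean_records(records, numeric_field):
    """Drop exact duplicate rows, then fill missing numeric values with the median.

    `records` is a list of flat dicts with hashable values (a hypothetical layout).
    """
    # Remove exact duplicates while preserving the original order.
    seen = set()
    unique = []
    for row in records:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))  # copy so the caller's rows are not mutated

    # Compute the median from the values that are actually present.
    observed = [r[numeric_field] for r in unique if r.get(numeric_field) is not None]
    if not observed:
        raise ValueError(f"no observed values for {numeric_field!r}")
    fill_value = median(observed)

    # Impute the missing entries.
    for r in unique:
        if r.get(numeric_field) is None:
            r[numeric_field] = fill_value
    return unique


raw = [
    {"age": 30, "city": "Oslo"},
    {"age": None, "city": "Lima"},
    {"age": 30, "city": "Oslo"},  # exact duplicate of the first row
    {"age": 50, "city": "Pune"},
]
cleaned = clean_records(raw, "age")
```

Median imputation is only one option; whether to impute, drop, or flag missing values depends on why the data is missing, which is part of what makes this stage time-consuming.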

Model development follows, where data scientists experiment with algorithms, tune hyperparameters, and evaluate different architectures. Experiment tracking plays a crucial role in documenting results and ensuring reproducibility. Models are assessed using appropriate metrics, cross-validation techniques, and testing pipelines to confirm their validity.
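To make the cross-validation idea concrete, here is a minimal sketch of k-fold splitting written with the standard library only. The function names and the `fit` / `score` callables are hypothetical placeholders; in practice a library implementation would normally be used, and the data would usually be shuffled or stratified before splitting.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous, near-equal folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # The first (n_samples % k) folds receive one extra sample.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def cross_validate(xs, ys, fit, score, k=5):
    """Average score(model, X_test, y_test) over k folds.

    `fit(X, y)` returns a model and `score(model, X, y)` returns a number;
    both are supplied by the caller.
    """
    scores = []
    for train_idx, test_idx in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(
            score(model, [xs[i] for i in test_idx], [ys[i] for i in test_idx])
        )
    return sum(scores) / len(scores)


# Baseline "model": always predict the mean of the training targets.
def fit_mean(X, y):
    return sum(y) / len(y)


# Score: negative mean absolute error, so higher is better.
def neg_mae(model, X, y):
    return -sum(abs(target - model) for target in y) / len(y)


xs = list(range(10))
ys = [2.0 * x for x in xs]
baseline_score = cross_validate(xs, ys, fit_mean, neg_mae, k=5)
```

Each sample lands in exactly one test fold, so every observation is used for evaluation once and for training k - 1 times.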

After the model is deemed fit for deployment, the focus shifts to operationalization. Deployment strategies vary with the system's requirements: batch processing, streaming pipelines, or real-time APIs. The model must integrate cleanly with existing infrastructure so that it delivers low latency, consistent outputs, and room to scale. This stage often requires close collaboration between data science, engineering, and DevOps teams.

Once deployed, models enter the monitoring phase. Real-world data can differ significantly from training data, causing performance degradation. Monitoring tools track accuracy, drift, latency, and resource usage. Alerts help teams detect anomalies early, preventing harmful predictions and ensuring that the model continues to function as expected.
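One common way to quantify drift in a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of live data against a training-time baseline. The sketch below is a minimal version under stated assumptions: equal-width bins taken from the baseline range, a small `eps` floor to avoid taking the logarithm of zero, and function and parameter names chosen for illustration. The source does not prescribe PSI; it is used here only as one example of a drift metric.

```python
import math


def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI between a baseline sample (`expected`) and a live sample (`actual`)."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if the baseline is constant.
    width = (hi - lo) / n_bins or 1.0

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range go into the first or last bin.
            idx = min(max(idx, 0), n_bins - 1)
            counts[idx] += 1
        total = len(values)
        # Floor each proportion so empty bins do not produce log(0).
        return [max(c / total, eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


baseline = [float(v) for v in range(100)]
shifted = [v + 30.0 for v in baseline]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but these cut-offs are conventions rather than guarantees and should be tuned per feature before they drive any alert.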

Retraining and updates are essential as the environment evolves. Models may require fresh data, new features, or algorithmic adjustments to maintain performance. Retraining cycles can be periodic or triggered automatically when drift thresholds are exceeded. Versioning ensures that teams can roll back to previous models if needed.
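The interaction between drift-triggered retraining and versioning can be sketched with a tiny in-memory registry. Everything here is hypothetical: `ModelRegistry`, `maybe_retrain`, the string artifacts, and the threshold value stand in for whatever model store and drift metric a team actually uses.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Keeps every registered artifact and tracks which one is active."""

    versions: list = field(default_factory=list)  # artifacts in registration order
    active: int = -1  # index of the serving version, -1 when nothing is registered

    def register(self, artifact):
        """Store a new artifact and make it the active version."""
        self.versions.append(artifact)
        self.active = len(self.versions) - 1
        return self.active

    def rollback(self):
        """Reactivate the version registered just before the active one."""
        if self.active <= 0:
            raise RuntimeError("no earlier version to roll back to")
        self.active -= 1
        return self.active

    def current(self):
        if self.active < 0:
            raise RuntimeError("no model registered")
        return self.versions[self.active]


def maybe_retrain(registry, drift_score, threshold, train_fn):
    """Retrain and register a new version only when drift exceeds the threshold."""
    if drift_score <= threshold:
        return False
    registry.register(train_fn())
    return True


registry = ModelRegistry()
registry.register("model-v1")

# Drift below the threshold: nothing happens.
skipped = maybe_retrain(registry, drift_score=0.05, threshold=0.2,
                        train_fn=lambda: "model-v2")
# Drift above the threshold: a new version is trained and activated.
retrained = maybe_retrain(registry, drift_score=0.40, threshold=0.2,
                          train_fn=lambda: "model-v2")
```

Because old artifacts are never deleted, calling `registry.rollback()` after a bad release restores the previous version without retraining.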

Governance and documentation run throughout the lifecycle. Ethical considerations, compliance requirements, and audit trails ensure that models meet regulatory standards and organizational policies. Clear documentation of data sources, assumptions, risks, and design choices increases transparency and accountability.
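One lightweight way to keep this documentation close to the model is a structured record stored alongside each version. The sketch below assumes a hypothetical `ModelCard` dataclass whose fields mirror the items listed above; the field names, the sample values, and the JSON layout are illustrative only and do not follow any specific standard.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ModelCard:
    """Minimal documentation record for one model version (hypothetical schema)."""

    model_name: str
    version: str
    data_sources: list
    assumptions: list
    risks: list
    design_choices: list


def to_audit_record(card):
    """Serialize the card to stable, human-readable JSON for an audit trail."""
    return json.dumps(asdict(card), sort_keys=True, indent=2)


card = ModelCard(
    model_name="churn-classifier",  # illustrative values, not from the source
    version="1.0.0",
    data_sources=["crm_export"],
    assumptions=["labels reflect churn within 90 days"],
    risks=["under-represents new customers"],
    design_choices=["gradient-boosted trees chosen for tabular data"],
)
record = to_audit_record(card)
```

Sorting the keys keeps the serialized output stable, so changes between versions show up cleanly in a diff.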

Eventually, a model reaches a point where it no longer serves its purpose or is superseded by a better alternative. Decommissioning involves retiring the model, archiving its artifacts, and updating dependent systems to use its replacement. The lifecycle is cyclical, and lessons learned from each generation inform the next.
