Human-in-the-Loop (HITL) automation systems combine automated decision-making with human review, and they are becoming a standard pattern in software development, process automation, and AI-driven decision-making. Traditional automation relies on rules, algorithms, and machine learning models that run with little or no human supervision. These systems reduce manual effort, but they have blind spots: ethical decisions, edge cases, safety-critical events, and ambiguous real-world scenarios. HITL addresses this gap by pairing the speed and scalability of AI with human judgment, empathy, and contextual understanding. As organizations integrate AI into high-risk environments like healthcare, finance, manufacturing, and cybersecurity, HITL keeps humans in a position of meaningful oversight, which helps catch failures that unchecked automation would let through. Done well, this hybrid approach can improve accuracy, transparency, and accountability while retaining most of the benefits of automation.

Modern automation systems face a fundamental limitation: they are only as reliable as the data on which they are trained. In domains where conditions constantly evolve, such as fraud detection, medical diagnostics, or autonomous vehicles, models require continuous learning, correction, and supervision. Human-in-the-Loop systems let experts validate ambiguous model predictions, intervene when automation is uncertain, and adjust workflows when real-world conditions shift unexpectedly. For example, an AI fraud detection system may flag suspicious activity, but a human analyst reviews the context to avoid wrongly blocking genuine users. Similarly, an AI medical assistant may produce diagnosis recommendations, but a doctor evaluates risks, comorbidities, and patient-specific nuances. Because humans feed their corrections back into the model, the system can improve over time, which strengthens reliability and reduces automation errors.

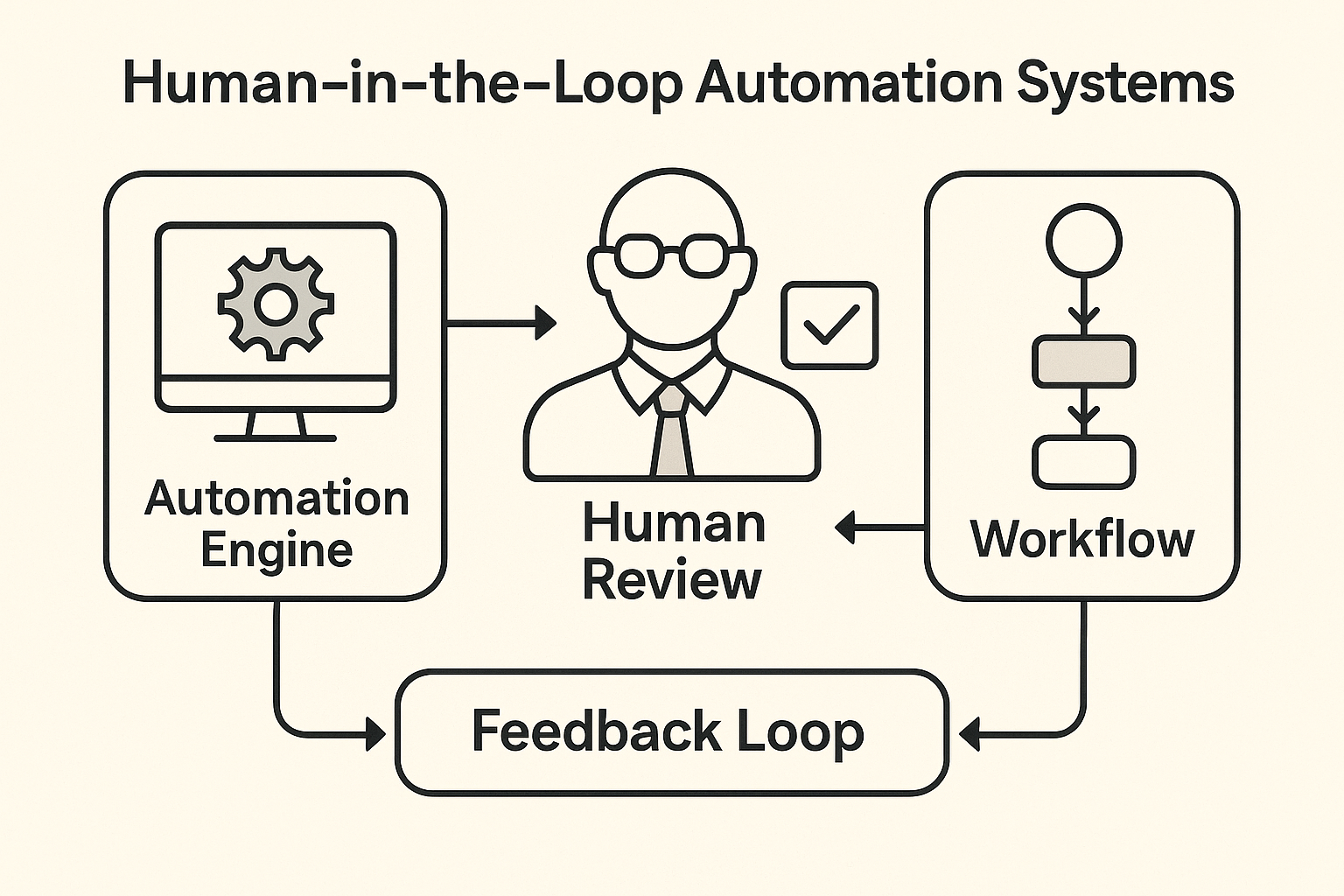

A Human-in-the-Loop automation architecture typically includes three foundational layers: automated AI agents, human validation workflows, and continuous feedback pipelines. The automation engine handles high-volume tasks (classification, prediction, decision routing, or data extraction), while humans intervene only when required. The human review layer may be triggered by uncertainty thresholds, anomaly detection, risk-sensitive operations, or user-initiated correction requests. Once a human validates or corrects the system’s output, the feedback flows back into the model training pipeline, improving accuracy in similar situations. This “correct–retrain–deploy” cycle is what differentiates HITL from static automation. Many organizations use dashboards, annotation tools, and real-time analytics platforms to streamline human participation. Advanced implementations also assign responsibility by role, so that specific experts handle specific types of AI corrections, which supports both precision and accountability.
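
The three layers described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class names `Prediction` and `HitlPipeline`, the `0.85` threshold, and the in-memory lists standing in for a review queue and a feedback store are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float        # model's probability for `label`, in [0, 1]
    high_risk: bool = False  # set by business rules, not by the model


@dataclass
class HitlPipeline:
    threshold: float = 0.85  # hypothetical cut-off; tune per use case
    review_queue: List[Prediction] = field(default_factory=list)
    feedback: List[Tuple[str, str, str]] = field(default_factory=list)

    def route(self, pred: Prediction) -> str:
        """Return 'auto' to accept the model output, or 'human' to escalate."""
        if pred.high_risk or pred.confidence < self.threshold:
            self.review_queue.append(pred)
            return "human"
        return "auto"

    def record_review(self, pred: Prediction, human_label: str) -> None:
        """Store (item_id, model_label, human_label) for the next retraining run."""
        self.feedback.append((pred.item_id, pred.label, human_label))
        self.review_queue.remove(pred)


pipeline = HitlPipeline()
confident = Prediction("tx-1", "legit", 0.97)
uncertain = Prediction("tx-2", "fraud", 0.61)

print(pipeline.route(confident))  # auto
print(pipeline.route(uncertain))  # human

# The analyst disagrees with the model; the correction is kept for retraining.
pipeline.record_review(uncertain, "legit")
print(pipeline.feedback)  # [('tx-2', 'fraud', 'legit')]
```

In a real deployment the queue and feedback store would be durable services and the retraining step would consume `feedback` on a schedule; the sketch only shows how the routing decision and the correction record fit together.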

Human-in-the-Loop automation applies across a wide range of industries. In healthcare, clinicians confirm AI predictions for radiography, pathology, or triage, where accuracy is safety-critical. In finance, analysts review loan approvals and fraud flags, reducing false positives that inconvenience customers. E-commerce platforms use HITL for categorization, recommendation tuning, and sensitive content moderation. Autonomous systems such as drones, self-driving cars, and robotic arms rely on human oversight during complex decisions, such as navigating unfamiliar terrain or handling hazardous materials. Social media moderation also depends heavily on HITL: machine filters identify potential violations, while humans make nuanced, culturally aware judgments. This breadth of adoption suggests that HITL is not a fallback method but a core requirement for responsible, dependable, and ethical automation.

One of the greatest strengths of Human-in-the-Loop systems lies in their ability to uphold ethical standards. Purely automated systems can unintentionally amplify biases present in training data, leading to unfair decisions in hiring, policing, lending, or online moderation. HITL gives humans the chance to detect bias, validate sensitive decisions, and apply context-sensitive reasoning that current models handle poorly. It also introduces transparency: when humans intervene, logs can capture explanations, rationales, and decision trails, which are crucial for auditability. Regulatory frameworks such as the EU AI Act, along with emerging governance policies elsewhere, increasingly require human oversight of high-risk automated processes. HITL systems are a natural fit for these requirements because sensitive decisions pass through a reviewer rather than being made blindly. They establish accountability by keeping ultimate responsibility for final outcomes in critical scenarios with humans, not machines.
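
To make the audit trail concrete, the following sketch records each human intervention as a JSON line. The field names, the `ReviewRecord` type, and the `log_review` helper are hypothetical, chosen for illustration; a production system would write to append-only storage rather than a Python list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class ReviewRecord:
    item_id: str
    model_output: str
    human_decision: str
    reviewer: str
    rationale: str
    timestamp: str  # ISO 8601, UTC

    @property
    def overridden(self) -> bool:
        """True when the reviewer disagreed with the model."""
        return self.model_output != self.human_decision


def log_review(item_id: str, model_output: str, human_decision: str,
               reviewer: str, rationale: str, log: List[str]) -> ReviewRecord:
    """Append one serialized decision trail entry to `log` and return the record."""
    record = ReviewRecord(
        item_id=item_id,
        model_output=model_output,
        human_decision=human_decision,
        reviewer=reviewer,
        rationale=rationale,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


audit_log: List[str] = []
entry = log_review("loan-42", "deny", "approve", "analyst-7",
                   "Income verified from documents the model did not see.",
                   audit_log)
print(entry.overridden)  # True
print(len(audit_log))    # 1
```

Storing the rationale next to both the model output and the human decision is what lets an auditor later reconstruct why a given outcome was reached.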

Despite their advantages, Human-in-the-Loop systems introduce engineering challenges. The first is reviewer workload. If the automation asks for confirmation too often, productivity drops and the human reviewers become a bottleneck. Conversely, if the automation decides too much without adequate oversight, risk increases. Well-chosen confidence thresholds are therefore essential: the system should escalate only uncertain or high-risk cases. A second challenge is latency: human validation takes far longer than automated processing, which makes HITL unsuitable for ultra-low-latency scenarios without careful optimization. Data security is a third concern, since humans reviewing sensitive data must adhere to strict privacy protocols. Finally, incorporating human feedback into model retraining pipelines requires robust ML engineering, version control, and continuous integration processes. Overcoming these challenges takes deliberate engineering and process design.
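
One way to reason about the workload trade-off is to measure, on historical confidence scores, what fraction of cases each candidate threshold would escalate, and then pick the strictest threshold the review team can absorb. The sketch below assumes that confidence is the only escalation trigger; the function names and sample numbers are invented for illustration.

```python
from typing import Optional, Sequence


def escalation_rate(confidences: Sequence[float], threshold: float) -> float:
    """Fraction of cases whose confidence falls below the threshold."""
    if not confidences:
        return 0.0
    return sum(c < threshold for c in confidences) / len(confidences)


def highest_affordable_threshold(confidences: Sequence[float],
                                 candidates: Sequence[float],
                                 max_rate: float) -> Optional[float]:
    """Strictest candidate whose escalation rate fits reviewer capacity, else None."""
    affordable = [t for t in candidates
                  if escalation_rate(confidences, t) <= max_rate]
    return max(affordable) if affordable else None


# Made-up confidence scores from ten past predictions.
confidences = [0.99, 0.95, 0.91, 0.88, 0.72, 0.65, 0.97, 0.93, 0.58, 0.96]

# Reviewers can handle at most 35% of traffic.
chosen = highest_affordable_threshold(confidences, [0.6, 0.7, 0.8, 0.9], 0.35)
print(chosen)                                # 0.8
print(escalation_rate(confidences, chosen))  # 0.3
```

A threshold of 0.9 would escalate four of the ten cases and exceed capacity, so 0.8 is selected. In practice the choice should also weigh the cost of errors among the cases that are not escalated, which this sketch does not model.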

HITL automation is moving toward more dynamic, transparent, and collaborative systems. Explainable AI (XAI) is expected to play a key role by helping humans understand why models made particular decisions, which reduces uncertainty during validation. Real-time AI agents may collaborate with human experts by offering suggestions, highlighting anomalies, and predicting where intervention is likely to be needed. Multimodal systems could let humans provide feedback using voice, gestures, or direct annotation. Edge computing can support HITL in robotics, drones, and AR/VR environments by enabling low-latency human oversight. As generative AI grows, HITL will also be important for validating AI-generated content for accuracy, safety, and ethical consistency. In this vision, humans and automation function like teammates, each compensating for the other's weaknesses.

Organizations adopting Human-in-the-Loop automation can gain both efficiency and reliability. Processes become faster and more scalable, while human corrections keep quality high. HITL can reduce costly errors, especially in regulated industries, by adding expert oversight. It can also strengthen customer trust, because users know that decisions affecting them aren’t left entirely to algorithms. Continuous feedback loops turn organizations into learning systems in which each correction can improve future performance. In addition, teams can deploy AI systems with more confidence, because human review lowers the risk of the serious failures that often discourage automation adoption. By integrating human expertise into machine workflows, organizations position themselves for responsible AI-driven growth.

Human-in-the-Loop automation systems represent a balance between innovation and responsibility. Rather than replacing humans, modern automation seeks to augment their intelligence, decision-making, and problem-solving capabilities. The future belongs to hybrid systems in which humans supervise automation, AI handles repetitive tasks, and both collaborate fluidly. HITL supports safety, fairness, reliability, and long-term adaptability, qualities essential for AI-powered systems operating in complex, real-world environments. As industries evolve, HITL is likely to remain a cornerstone of ethical and scalable automation, enabling organizations to innovate while maintaining human control where it matters most.
