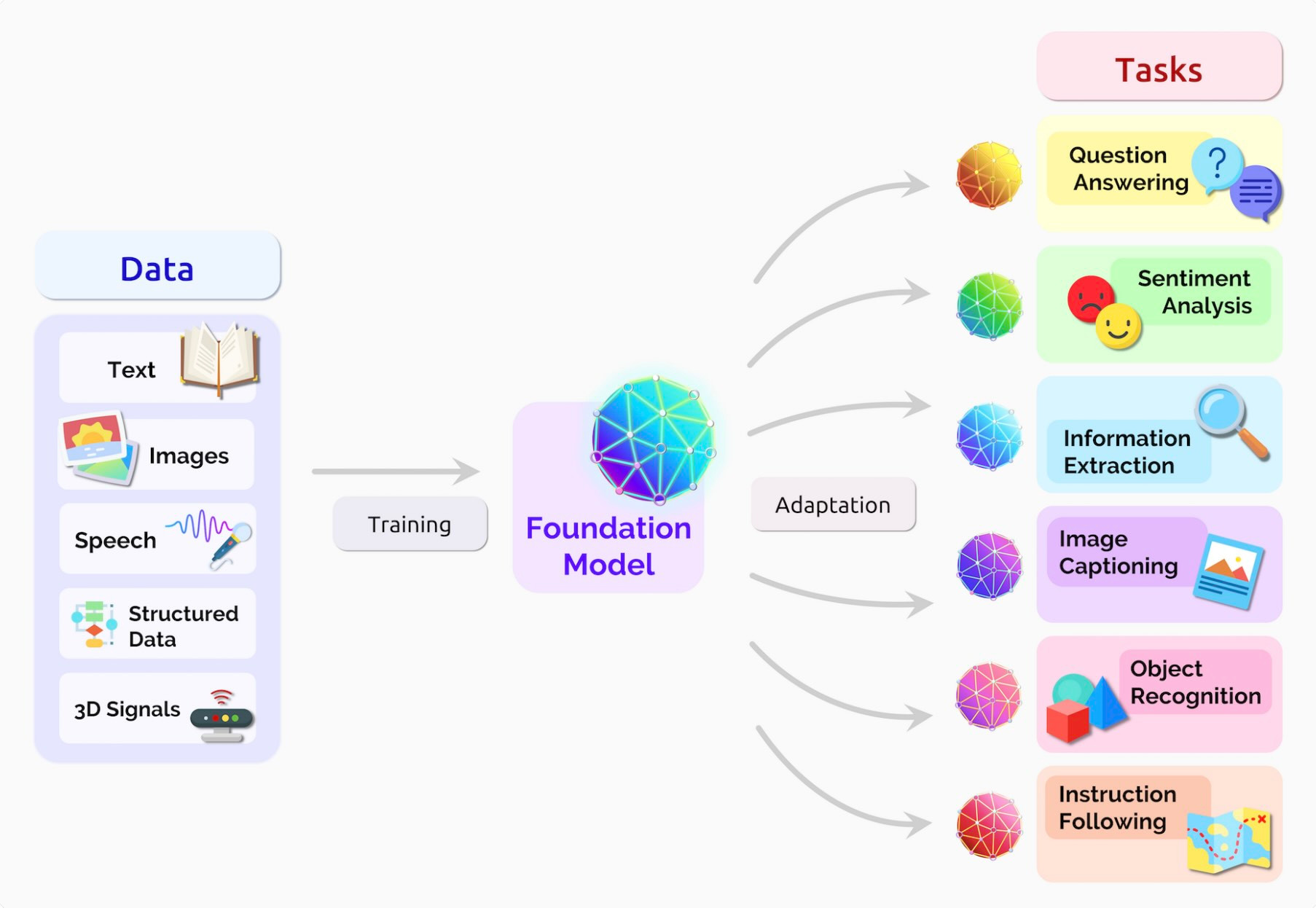

Foundation Models & LLM-Powered Analytics represent one of the most transformative shifts in modern data science, fundamentally changing how organizations extract insights, automate decision-making, and operationalize intelligence at scale. Foundation models are extremely large-scale neural networks—such as GPT, PaLM, Claude, LLaMA, Gemini, and other multimodal architectures—that have been trained on massive, diverse datasets and can be adapted to a wide range of downstream tasks. Unlike traditional machine learning models, which require task-specific data and domain-specific pipelines, foundation models serve as general-purpose engines of reasoning, language understanding, pattern recognition, and multimodal comprehension. When integrated into enterprise analytics workflows, these models enable organizations to move from descriptive dashboards to intelligent systems capable of generating insights, summarizing trends, predicting scenarios, automating workflows, and interacting with data conversationally. LLM-powered analytics thus represent a new era where AI not only reports on data but actively collaborates with humans to derive meaning and inform strategic decisions.

At the core of this transformation is the concept of transferability and generalization. Foundation models are trained on trillions of tokens and billions of multimodal samples, learning patterns that extend beyond any single domain. As a result, they possess language understanding, reasoning capabilities, and contextual knowledge that were previously unattainable in analytics systems. Instead of building separate models for text classification, summarization, anomaly detection, forecasting, and conversational querying, enterprises can fine-tune or prompt a single foundation model to handle many of these tasks, often pairing it with dedicated statistical models for heavily numeric work such as forecasting. This reduces development overhead, accelerates deployment, and democratizes analytics by enabling analysts, business leaders, and non-technical teams to interact with complex datasets using natural language. The foundation model becomes a centralized intelligence layer, orchestrating insights across departments, data platforms, and business operations.

One of the most significant advances enabled by foundation models is Natural Language Analytics, where users can ask business questions conversationally and receive context-aware insights. Traditional BI tools require SQL queries, dashboards, or complex filtering steps, but LLM-powered analytics interfaces allow users to ask: “What caused our sales dip in Q2?” or “Summarize customer sentiment from the last month’s reviews.” The model interprets the question, fetches relevant data (typically by generating a query against the underlying data store), performs the required analysis, and generates a human-readable explanation. This changes decision-making by removing the technical barriers between the data and the decision-maker. Modern analytics platforms increasingly embed LLM agents that autonomously browse data warehouses, logs, CRM platforms, and enterprise documents to generate actionable insights, highlight anomalies, and even recommend operational changes.
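The interpret, fetch, analyze, and explain loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular platform works: `question_to_sql` is a hypothetical stand-in for a model call (here a fixed lookup so the example runs offline), the `sales` table and its rows are invented demo data, and the explanation step is a plain template instead of generated text.

```python
import sqlite3


def question_to_sql(question: str) -> str:
    """Hypothetical stand-in for an LLM that translates a question into SQL.

    A real system would send the question and the table schema to a model;
    a fixed lookup keeps this sketch runnable without network access.
    """
    canned = {
        "What were total sales per quarter?": (
            "SELECT quarter, SUM(amount) AS total "
            "FROM sales GROUP BY quarter ORDER BY quarter"
        ),
    }
    if question not in canned:
        raise ValueError("question not supported by this stub")
    return canned[question]


def run_query(conn: sqlite3.Connection, sql: str) -> list:
    """Execute generated SQL, allowing only read-only SELECT statements."""
    if not sql.lstrip().upper().startswith("SELECT"):
        raise ValueError("only SELECT statements are allowed")
    return conn.execute(sql).fetchall()


def explain(rows: list) -> str:
    """Turn (quarter, total) rows into a short plain-language summary."""
    parts = [f"{quarter}: {total:.0f}" for quarter, total in rows]
    lowest = min(rows, key=lambda row: row[1])
    return "Sales by quarter: " + ", ".join(parts) + f". Lowest quarter: {lowest[0]}."


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with invented demo rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (quarter TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("Q1", 120.0), ("Q1", 80.0), ("Q2", 90.0), ("Q2", 40.0), ("Q3", 150.0)],
    )
    conn.commit()
    return conn


def answer(conn: sqlite3.Connection, question: str) -> str:
    """Interpret the question, fetch the data, and explain the result."""
    sql = question_to_sql(question)
    rows = run_query(conn, sql)
    return explain(rows)
```

Calling `answer(build_demo_db(), "What were total sales per quarter?")` returns a one-sentence summary of the three quarters. The `SELECT`-only check in `run_query` is a deliberately simple guardrail; a production system would more likely rely on read-only database credentials.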

Another key innovation lies in autonomous analytics workflows, where foundation models manage continuous monitoring, forecasting, and alerting without manual intervention. Instead of static dashboards that require human inspection, LLM-powered systems automatically scan for unusual patterns in sales, supply chain activity, user behavior, cybersecurity logs, or operational metrics. Upon detecting anomalies, the model can generate root-cause analyses, propose corrective actions, and communicate alerts through natural-language notifications. Some enterprise systems also deploy LLM-powered agents that trigger workflows—such as reordering inventory, adjusting marketing campaigns, updating pricing models, or reallocating cloud resources—based on predictive insights. This creates a proactive analytics ecosystem where AI acts as a decision co-pilot rather than a passive reporting tool.
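As a rough sketch of the monitoring and alerting loop described above, the following flags values that sit far from a trailing-window average and renders a natural-language notification. The rolling z-score is an assumption chosen for brevity, not a method the text prescribes, and the metric name, window size, and threshold are illustrative. In a fuller system the alert text would come from a model and could feed an approval step before any workflow is triggered.

```python
from statistics import mean, stdev


def find_anomalies(values, window=5, threshold=3.0):
    """Return (index, value, z_score) for points far from the trailing mean.

    Each point is compared with the `window` values immediately before it.
    """
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A flat history gives no scale to measure deviation against.
            continue
        z = (values[i] - mu) / sigma
        if abs(z) >= threshold:
            anomalies.append((i, values[i], z))
    return anomalies


def format_alert(metric, index, value, z):
    """Render one anomaly as a plain-language notification."""
    direction = "above" if z > 0 else "below"
    return (
        f"{metric}: value {value} at position {index} is "
        f"{abs(z):.1f} standard deviations {direction} the recent average."
    )


def scan(metric, values, window=5, threshold=3.0):
    """Scan a series and return one alert string per detected anomaly."""
    return [
        format_alert(metric, i, v, z)
        for i, v, z in find_anomalies(values, window, threshold)
    ]
```

For example, `scan("daily_sales", [100, 102, 98, 101, 99, 100, 40, 101])` produces a single alert for the drop to 40. One limitation of this simple design is that a detected outlier stays in the trailing window and inflates the spread for the next few points, which can mask follow-on anomalies.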

Foundation models also enhance multimodal analytics, enabling seamless integration of text, images, audio, video, tabular data, sensor streams, and geospatial sources. Traditional analytics tools struggle with unstructured data, but LLMs and vision-language models can interpret product images, read scanned documents, analyze customer calls, understand blueprints, evaluate medical images, and extract information from charts. This multimodal intelligence unlocks entirely new analytical capabilities—for example, retailers can analyze shelf images to detect stock-outs, banks can automate document verification using scanned forms, and manufacturers can interpret machine vibration audio for predictive maintenance. When combined with structured data from warehouses, multimodal analytics helps organizations build holistic intelligence systems that understand context-rich environments more deeply than ever before.

A critical component of foundation model integration is fine-tuning and personalization. While base LLMs provide broad reasoning capabilities, enterprise analytics often requires domain-specific knowledge, such as financial regulations, medical terminology, supply chain networks, industrial systems, or internal business workflows. Fine-tuning allows organizations to specialize foundation models using proprietary datasets, improving accuracy and domain alignment. Parameter-efficient methods such as model adapters and low-rank adaptation (LoRA) lower the cost of that specialization by training a small set of additional weights instead of the full model. Retrieval-Augmented Generation (RAG), typically backed by a vector database, complements fine-tuning by grounding responses in current organizational data. With RAG-powered analytics, LLMs can pull information from data warehouses, cloud storage, internal documents, and APIs to produce precise, up-to-date insights. This hybrid approach reduces, though it does not eliminate, the risk of hallucination and makes the model a more reliable enterprise analytics engine.
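The retrieval step in RAG can be illustrated with a small sketch: rank stored passages by similarity to the question, then place the best matches in the prompt so the model answers from them. To stay self-contained, this example assumes bag-of-words cosine similarity in place of learned embeddings and a vector database, and the passages in `DOCS` are invented for illustration. The final call to a model is left out.

```python
import math
import re
from collections import Counter

# Invented example passages standing in for an enterprise document store.
DOCS = [
    "Q2 sales in the north region fell after the pricing change in April.",
    "The HR handbook describes the vacation policy for new employees.",
    "Customer reviews in June mention slow delivery and damaged packaging.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, k=2):
    """Return up to k documents with non-zero similarity, best match first."""
    query_vec = Counter(tokenize(query))
    scored = [(cosine(query_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(query, passages):
    """Assemble a prompt that asks the model to answer from the passages only."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )
```

With the question "Why did sales fall in Q2?", `retrieve` ranks the Q2 sales passage first and never returns the HR passage, which shares no tokens with the question. The reviews passage still scores above zero because of the shared word "in", a reminder that raw word overlap is a crude signal and one reason real systems use embeddings.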

However, LLM-powered analytics introduces new challenges in governance, ethics, compliance, and security. As models integrate deeper into business decision-making processes, ensuring transparency, trustworthiness, and regulatory compliance becomes essential. Organizations must implement guardrails to prevent hallucinated outputs, enforce data-access controls to protect sensitive information, and establish audit trails to track how and why AI-generated insights were produced. Data lineage, privacy-preserving architectures, role-based permissions, and policy-based filters help ensure that foundation models operate responsibly. Fairness and bias mitigation remain crucial, especially in sensitive industries like finance, hiring, healthcare, and public governance. Developing robust AI governance frameworks allows organizations to harness the power of LLM analytics while ensuring ethical and compliant usage.
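Two of the controls named above, role-based permissions and audit trails, can be sketched as a thin wrapper around whatever function actually fetches data for the model. Everything here is hypothetical: the roles, the table names, the in-memory `AUDIT_LOG` list, and the `fetch` callback are illustrative placeholders, and a real deployment would persist the log in tamper-resistant storage.

```python
from datetime import datetime, timezone

# Hypothetical mapping from role to the tables that role may read.
ROLE_PERMISSIONS = {
    "analyst": {"sales", "inventory"},
    "hr_partner": {"employees"},
}

# In-memory audit trail for the sketch; one dict per attempted access.
AUDIT_LOG = []


def authorize(role, table):
    """Return True if the role is allowed to read the table."""
    return table in ROLE_PERMISSIONS.get(role, set())


def governed_query(user, role, table, question, fetch):
    """Record the request, enforce the permission check, then fetch data.

    `fetch` is a caller-supplied function that takes a table name and
    returns rows. The audit entry is written before the check is enforced,
    so denied attempts are recorded as well.
    """
    allowed = authorize(role, table)
    AUDIT_LOG.append(
        {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "table": table,
            "question": question,
            "allowed": allowed,
        }
    )
    if not allowed:
        raise PermissionError(f"role '{role}' may not read table '{table}'")
    return fetch(table)
```

An analyst asking about `sales` gets rows back, while the same analyst asking about `employees` raises `PermissionError`; both attempts appear in `AUDIT_LOG`. Logging the question alongside the decision gives reviewers a starting point for tracing how an AI-generated insight was produced.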

The future of foundation models in analytics points toward fully autonomous decision intelligence systems, where AI agents collaborate, communicate, and operate across organizational functions. LLMs combined with multi-agent frameworks will enable fleets of specialized AI assistants—each focusing on finance, supply chain, customer analytics, HR, cybersecurity, or operations. These agents will coordinate using shared memory, negotiate priorities, and generate unified reports that combine insights across domains. With the integration of real-time data streams, edge analytics, and reinforcement learning, future systems may autonomously optimize logistics networks, adjust financial forecasts, manage cloud infrastructure, or orchestrate smart factories. As foundation models evolve to become more reasoning-focused, grounded, and multimodal, they will become core engines powering digital enterprises.

Ultimately, Foundation Models & LLM-Powered Analytics represent a paradigm shift in how organizations understand, analyze, and act upon data. They move analytics from static dashboards to dynamic intelligence systems capable of conversation, reasoning, and autonomous decision-making. Organizations that embrace this shift gain unparalleled insight velocity, business agility, and competitive advantage. As foundation models continue scaling, becoming more efficient, interpretable, and specialized, they will redefine the entire analytics lifecycle—from ingestion and exploration to forecasting, explanation, and automation. The future of analytics is not just data-driven—it is AI-aligned, context-aware, collaborative, and deeply integrated into every aspect of organizational strategy.
