Federated learning is a distributed machine learning approach that enables models to be trained across multiple devices or servers without requiring raw data to be shared or centralized. Instead of collecting data in a single location, the training process happens locally on each device using its own data. This decentralized approach fundamentally changes how machine learning systems are built, especially in environments where data privacy and security are critical.

One of the key motivations behind federated learning is protecting sensitive user information. Because raw data never leaves the user’s device, there is no central store of personal or confidential records to breach, which reduces the risk of data leaks and unauthorized access. This makes federated learning especially valuable in industries that handle sensitive data, such as healthcare and finance.

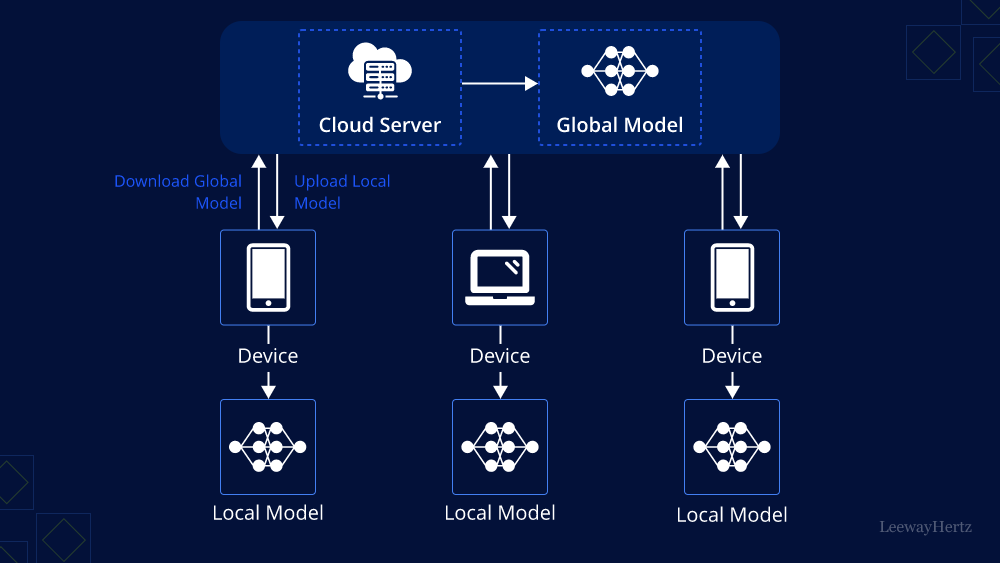

In a typical federated learning workflow, each participating device trains a local version of the model using its own dataset. After local training, only the model updates—such as gradients or weight changes—are sent to a central server. The server aggregates these updates from many devices to create an improved global model, which is then redistributed to the devices for the next training round.
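The round described above can be sketched in a few lines. This is a minimal illustration, assuming a one-parameter linear model `y ≈ w * x` and size-weighted averaging of client weights (the scheme commonly called federated averaging); the names `local_train` and `federated_round`, the toy datasets, and the learning rate are all hypothetical choices made for this example, not part of any particular framework.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs held privately by one client


def local_train(w: float, data: Dataset, lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient-descent steps for y ≈ w * x on one client's own data."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(global_w: float, clients: List[Dataset]) -> float:
    """One round: clients train locally, the server averages their weights."""
    total = sum(len(d) for d in clients)
    # Only the trained weight and the dataset size leave each client.
    results = [(local_train(global_w, d), len(d)) for d in clients]
    return sum(w * n for w, n in results) / total


# Hypothetical clients whose data all follow y = 2x.
clients = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0)],
    [(0.5, 1.0), (1.5, 3.0), (2.5, 5.0)],
]

w = 0.0
for _ in range(50):  # 50 communication rounds
    w = federated_round(w, clients)
```

After the loop, `w` ends up close to 2, even though the server only ever saw weights and dataset sizes, never the `(x, y)` pairs themselves.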

Federated learning is used in applications where direct data sharing is restricted or impractical. Common use cases include mobile keyboards that learn user typing patterns, healthcare systems that analyze patient data across hospitals, and financial services that detect fraud while preserving customer privacy. In each case, the participants learn a shared model collaboratively without exposing their raw data to one another.

A major advantage of federated learning is its alignment with data privacy regulations. Because data remains on local devices, it can support compliance with regulations such as GDPR and other privacy laws, although keeping data local does not by itself guarantee compliance. This makes federated learning a strong foundation for privacy-preserving artificial intelligence development.

Despite its benefits, federated learning introduces several technical challenges. Communication overhead can be significant because model updates must be exchanged between devices and the server in every training round. Device heterogeneity (differences in hardware, connectivity, and availability) complicates training, since slow or offline devices can delay or drop out of a round. Data quality and distribution also vary across devices, and a model trained on such uneven data can converge more slowly or perform worse than one trained on pooled data.
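To get a feel for the communication cost, a back-of-envelope estimate helps. The sketch below assumes that each selected client downloads the full model and uploads an update of the same size, with 4 bytes per parameter; the function name and every number in it are hypothetical.

```python
def round_traffic_bytes(num_params: int, clients_per_round: int,
                        bytes_per_param: int = 4) -> int:
    """Bytes moved in one round: one model download plus one upload per client."""
    return 2 * num_params * bytes_per_param * clients_per_round


# Hypothetical setup: 1M-parameter model, 100 clients per round, 500 rounds.
per_round = round_traffic_bytes(1_000_000, 100)  # 800_000_000 bytes (800 MB)
total = per_round * 500                          # 400_000_000_000 bytes (400 GB)
```

Even this small model moves hundreds of gigabytes over a full training run, which is why reducing the size or frequency of updates matters.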

Security is another important concern in federated learning systems. Threats such as model poisoning, where malicious participants send harmful updates, and data inference attacks, where attackers attempt to extract sensitive information from model updates, must be carefully mitigated to ensure system reliability and trust.
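The effect of a poisoned update is easy to see on a toy example. The sketch below compares plain averaging with a coordinate-wise median, which is one of several robust aggregation rules and is used here purely as an illustration; the update values and the `aggregate` helper are made up for this example.

```python
from statistics import mean, median
from typing import Callable, List


def aggregate(updates: List[List[float]],
              reducer: Callable[[List[float]], float]) -> List[float]:
    """Combine client updates coordinate by coordinate using `reducer`."""
    return [reducer(list(coord)) for coord in zip(*updates)]


# Four honest clients send similar two-parameter updates.
honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.22], [0.11, -0.21]]
# One malicious client sends an extreme update.
poisoned = honest + [[50.0, 50.0]]

mean_agg = aggregate(poisoned, mean)      # dragged far from the honest values
median_agg = aggregate(poisoned, median)  # [0.11, -0.2], stays near them
```

A single attacker shifts the mean by roughly 10 in each coordinate, while the median stays within the range of the honest updates. The median only helps while honest clients are the majority, so it is a mitigation rather than a complete defense.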

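Several techniques address these challenges. Secure aggregation and encryption keep individual model updates hidden from the server and from eavesdroppers, differential privacy limits how much can be inferred about any one participant’s data from an update, and efficient communication protocols (for example, compressing updates) reduce bandwidth. Together, these methods help protect model updates, reduce communication costs, and improve the overall robustness of federated learning systems.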
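As one concrete example, the core idea behind secure aggregation can be sketched with pairwise masks: each pair of clients agrees on a random mask that one adds and the other subtracts, so the masks cancel in the sum and the server learns only the total. This is a simplified sketch under strong assumptions: updates are already quantized to integers, no client drops out, and a seeded `random.Random` stands in for the pairwise shared secrets that a real protocol would derive through key agreement. All function names are hypothetical.

```python
import random
from typing import List

MOD = 2**32  # all arithmetic is done modulo a fixed integer range


def masked_updates(updates: List[List[int]], seed: int = 0) -> List[List[int]]:
    """Return each client's update with pairwise masks applied."""
    rng = random.Random(seed)  # stand-in for pairwise shared secrets
    n, dim = len(updates), len(updates[0])
    masked = [list(u) for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            mask = [rng.randrange(MOD) for _ in range(dim)]
            for k in range(dim):
                masked[i][k] = (masked[i][k] + mask[k]) % MOD  # client i adds
                masked[j][k] = (masked[j][k] - mask[k]) % MOD  # client j subtracts
    return masked


def server_sum(masked: List[List[int]]) -> List[int]:
    """The server sums what it receives; the masks cancel pairwise."""
    return [sum(col) % MOD for col in zip(*masked)]


updates = [[3, 1], [5, 2], [7, 4]]  # hypothetical quantized updates
total = server_sum(masked_updates(updates))  # [15, 7]
```

The server recovers the exact sum `[15, 7]` while each masked vector it receives looks like random numbers on its own. Production protocols add dropout recovery and authenticated key exchange on top of this idea.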

Overall, federated learning represents a significant shift toward decentralized, collaborative, and privacy-focused AI. By enabling collective model training without compromising sensitive data, it offers a powerful solution for building intelligent systems that respect user privacy while benefiting from large-scale learning.
