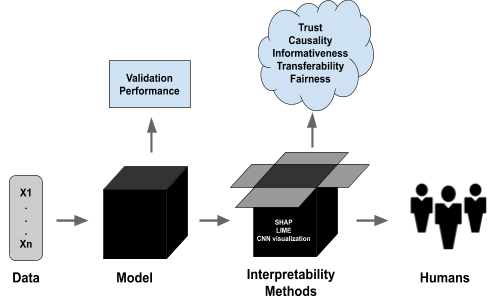

Explainable Artificial Intelligence (XAI) refers to a set of methods and techniques that make the decisions and behavior of AI models understandable to humans. Modern AI systems, especially deep learning models, are often seen as black boxes because they can give highly accurate results without explaining how they reached those conclusions. XAI addresses this by providing transparency, clarity, and justification for AI predictions. This transparency is essential for trust, accountability, debugging, compliance, and safe adoption of AI in sensitive sectors such as healthcare, finance, defense, and autonomous vehicles.

XAI helps answer questions like: “Why did the model make this prediction?”, “What features affected the decision?”, or “Can we trust this output?”. By exposing the logic behind AI decisions, XAI bridges the gap between complex algorithms and human understanding. It improves user confidence, helps developers identify model errors or biases, and supports fair and ethical AI deployment. With regulations such as the GDPR and emerging AI policies worldwide, organizations increasingly need to show that their AI systems are interpretable and auditable.

There are two main categories of explainability: intrinsic and post-hoc. Intrinsic explainability refers to models that are understandable by design, such as decision trees, linear regression, and rule-based systems. Post-hoc explainability refers to techniques applied to an already trained model to explain its predictions; these include LIME, SHAP, Grad-CAM, and feature importance visualization. These tools help interpret even complex models like neural networks by estimating which features influenced predictions and how strongly.
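As a rough illustration of the post-hoc idea, the sketch below implements permutation feature importance using only the Python standard library. It treats the model as an opaque function, shuffles one feature column at a time, and measures how much the prediction error grows. The `black_box` function, the synthetic data, and all parameter names are assumptions made up for this example; they do not come from any particular library.

```python
import random

def black_box(row):
    # Hypothetical opaque model: relies strongly on feature 0,
    # weakly on feature 1, and ignores feature 2 entirely.
    return 3.0 * row[0] + 0.5 * row[1]

def mean_squared_error(model, X, y):
    return sum((model(r) - t) ** 2 for r, t in zip(X, y)) / len(X)

def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average increase in error when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [r[j] for r in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [r[:j] + [v] + r[j + 1:] for r, v in zip(X, column)]
            increases.append(mean_squared_error(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Synthetic data: the targets are the model's own outputs, so baseline error is 0.
data_rng = random.Random(42)
X = [[data_rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]
y = [black_box(r) for r in X]

importances = permutation_importance(black_box, X, y)
for j, value in enumerate(importances):
    print(f"feature {j}: importance {value:.4f}")
```

Because the technique only calls the model and never inspects its internals, the same function would work for any predictor that maps a row to a number. The ranking it produces is an estimate that depends on the data and the number of repeats.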

XAI also distinguishes between local and global explanations. Local explanations clarify a single prediction, for example why a bank loan was approved for one customer but rejected for another. Global explanations describe the overall behavior of the AI model across all data. This enables analysts to understand general patterns, feature dependencies, and decision boundaries, making the system more predictable and reliable.
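The difference between local and global explanations can be sketched with a small linear scoring model. In this minimal example, a local explanation is each feature's contribution to one applicant's score relative to the average applicant, and a global explanation is the mean absolute contribution of each feature over the whole dataset. The feature names, weights, and applicant rows are hypothetical values chosen purely for illustration.

```python
# Hypothetical linear credit-scoring model; weights and data are illustrative only.
FEATURES = ["income", "debt_ratio", "years_employed"]
WEIGHTS = [0.8, -1.5, 0.3]
BIAS = 0.1

def score(row):
    return BIAS + sum(w * x for w, x in zip(WEIGHTS, row))

applicants = [
    [1.2, 0.2, 5.0],
    [0.4, 0.9, 1.0],
    [0.9, 0.5, 3.0],
    [0.6, 0.3, 8.0],
]

# Per-feature averages, used as the reference point for every explanation.
means = [sum(col) / len(col) for col in zip(*applicants)]

def local_explanation(row):
    """Contribution of each feature to this row's score, relative to the average applicant."""
    return {name: w * (x - m) for name, w, x, m in zip(FEATURES, WEIGHTS, row, means)}

def global_explanation(rows):
    """Mean absolute contribution of each feature across all rows."""
    totals = {name: 0.0 for name in FEATURES}
    for row in rows:
        for name, contribution in local_explanation(row).items():
            totals[name] += abs(contribution)
    return {name: total / len(rows) for name, total in totals.items()}

local = local_explanation(applicants[1])   # why did this one applicant score as they did?
overall = global_explanation(applicants)   # which features matter most in general?

print("local:", local)
print("global:", overall)
```

For a linear model the local contributions add up exactly to the gap between the applicant's score and the average applicant's score. For non-linear models, tools such as LIME or SHAP only approximate that kind of breakdown.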

Explainable AI plays a crucial role in identifying and reducing biases. AI systems trained on biased data may unintentionally discriminate against certain groups. XAI tools can help surface these biases by showing which features drive decisions, helping organizations improve fairness. Moreover, XAI enhances safety: in autonomous cars or medical diagnostic systems, seeing why the AI acted a certain way is critical for detecting risks before they cause harm.
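One simple check that a bias audit might begin with is comparing how often the model gives a positive outcome to each group. The sketch below computes per-group selection rates and their largest gap, often called the demographic parity difference. This is a fairness metric rather than an explanation method, and the predictions and group labels are made-up values for illustration.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions within each group."""
    counts = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        counts[group] += 1
        positives[group] += pred
    return {group: positives[group] / counts[group] for group in counts}

def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 means equal rates)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical model outputs (1 = approved) and group membership per applicant.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)
print(rates, gap)
```

A large gap does not by itself prove discrimination, but it flags where a closer look at feature attributions may be warranted.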

In industry, XAI is widely used for fraud detection, model auditing, credit scoring, medical diagnosis, recommendation systems, and legal decision support. These sectors require justification for automated decisions, and XAI provides the needed level of traceability. As AI systems continue to grow more complex, the need for explainability increases to meet ethical, legal, and operational standards.

Advantages

1) Improves trust and adoption of AI

2) Helps detect bias and unfair outcomes

3) Enhances safety and reliability

4) Facilitates regulatory compliance

5) Provides insights for model optimization

Disadvantages

1) Some complex models are very hard to explain

2) Explanation techniques are often approximations and may not reflect the model's true reasoning

3) Choosing a simpler, more interpretable model may reduce accuracy

4) Explanations can expose sensitive internal logic, raising security concerns
