In the last few years, deepfake technology has grown from a niche AI experiment into a serious cybersecurity threat. Deepfakes use deep learning models such as GANs (Generative Adversarial Networks) to create highly realistic synthetic media (videos, images, and voices) that look and sound authentic. Attackers already exploit this technology for fraud, impersonation, blackmail, and misinformation campaigns. As deepfake tools become easier to access and demand little technical expertise, individuals, companies, governments, and global institutions face risks that traditional security frameworks were not designed to handle.

The major danger of deepfakes comes from their ability to convincingly mimic real people. Attackers can now clone a CEO’s voice to approve fake financial transfers, imitate a political leader to spread false information, or generate compromising videos for extortion. Voice-cloning tools can create a believable 30-second audio sample from just a few seconds of real speech. Some video deepfake tools need only a few reference images to produce realistic facial movements and expressions. At this level of realism, “seeing is believing” no longer holds, and trust in digital media becomes fragile.

AI-driven attacks extend beyond deepfakes. Attackers use machine learning models to automate hacking attempts, identify vulnerabilities, and run adaptive attacks that improve over time. AI can analyze network behavior, bypass CAPTCHA systems, craft spear-phishing emails with carefully tuned emotional language, or help create malware that changes to avoid detection. This lets attackers scale operations faster, reduce manual effort, and target victims more precisely. AI-powered phishing emails, for example, can be hard to distinguish from legitimate messages and can be customized for each employee in a company.

Deepfake-based social engineering is already affecting businesses. In a widely reported 2019 case, attackers used AI-generated audio to impersonate the chief executive of a German parent company and persuaded the CEO of its UK energy subsidiary to transfer €220,000. Such attacks are difficult to detect because they bypass technical controls such as firewalls and exploit human psychology instead. When synthetic speech convincingly reproduces accent, tone, hesitation, and breathing patterns, employees struggle to tell real voices from cloned ones. Awareness training alone is therefore insufficient unless it is backed by additional authentication layers, such as confirming payment requests through a separate, trusted channel.
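To make the idea of an extra authentication layer concrete, here is a minimal sketch of an approval policy in which a voice call or email alone never authorizes a large or unusual transfer. Everything in it is an assumption for illustration: the `PaymentRequest` fields, the threshold, and the channel names are hypothetical and not taken from any real product or from the case above.

```python
from dataclasses import dataclass

# Hypothetical policy values; a real organization would choose its own.
CALLBACK_THRESHOLD_EUR = 10_000
TRUSTED_CHANNELS = {"callback_to_directory_number", "signed_ticket"}


@dataclass
class PaymentRequest:
    requester: str
    amount_eur: float
    channel: str            # how the request arrived, e.g. "phone" or "email"
    new_beneficiary: bool   # True if this payee has never been paid before
    confirmations: frozenset = frozenset()  # trusted channels that confirmed it


def requires_out_of_band_check(req):
    """Large transfers and first-time payees always need a second channel."""
    return req.amount_eur >= CALLBACK_THRESHOLD_EUR or req.new_beneficiary


def approve(req):
    """Approve only if no check is needed or a trusted channel confirmed."""
    if not requires_out_of_band_check(req):
        return True
    # The confirmation must come from a channel the caller does not control,
    # such as calling back a number taken from the internal directory.
    return bool(req.confirmations & TRUSTED_CHANNELS)


urgent_call = PaymentRequest("ceo", 220_000, "phone", new_beneficiary=True)
print(approve(urgent_call))  # False: a convincing voice is not enough
```

The design choice is that the decision depends on how the request was confirmed, not on how authentic the caller sounded, so a perfect voice clone gains nothing by itself.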

Misinformation is another dangerous area affected by deepfakes. Attackers can release fake political speeches, fabricated news videos, or manipulated interviews capable of influencing public opinion. During crises, deepfakes can accelerate panic or distort facts, creating confusion at a massive scale. Deepfake propaganda campaigns can destabilize elections, manipulate markets, or damage the reputation of public figures. The combination of social media speed and AI-generated realism gives attackers a powerful tool to shape narratives and influence societal behavior.

In the corporate world, AI-driven attacks threaten data privacy, business continuity, and brand trust. Deepfake customer voices can bypass call-center verification. Fraudsters can generate fake ID documents with AI-enhanced facial features that fool both humans and automated KYC (know-your-customer) systems. AI-powered malware can infiltrate internal networks, analyze defense patterns, and adjust its behavior dynamically to stay undetected. Because AI can now write malicious scripts and modify payloads, it lowers the skill barrier for attackers and widens the gap that defenders have to close.
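One common way to reduce reliance on how a caller sounds is to pair voice verification with a one-time code delivered to a device the customer already registered. The sketch below illustrates that idea under stated assumptions: the `CallerChallenge` class, its method names, and the two-minute validity window are hypothetical, and delivery of the code (SMS, app notification) is left out.

```python
import hmac
import secrets
import time

CODE_TTL_SECONDS = 120  # hypothetical validity window


class CallerChallenge:
    """Issues a one-time code per customer and accepts it at most once."""

    def __init__(self):
        self._pending = {}  # customer_id -> (code, expiry timestamp)

    def issue(self, customer_id, now=None):
        now = time.time() if now is None else now
        code = f"{secrets.randbelow(10**6):06d}"  # six random digits
        self._pending[customer_id] = (code, now + CODE_TTL_SECONDS)
        # In practice the code is sent to the registered device,
        # never read out to the caller by the agent.
        return code

    def verify(self, customer_id, spoken_code, now=None):
        now = time.time() if now is None else now
        entry = self._pending.pop(customer_id, None)  # single use
        if entry is None:
            return False
        code, expiry = entry
        # Constant-time comparison avoids leaking how many digits matched.
        matches = hmac.compare_digest(code.encode(), spoken_code.encode())
        return now <= expiry and matches
```

A cloned voice can repeat anything the attacker has heard, but it cannot produce a code that was sent only to the real customer's device.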

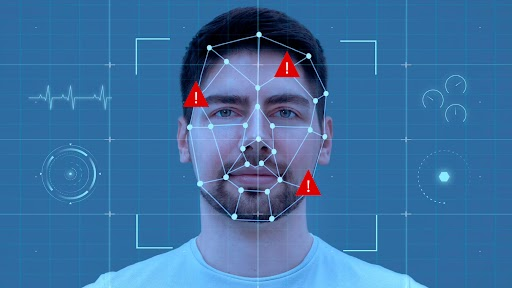

However, defenders are not powerless. AI is also being used to detect deepfakes by analyzing pixel inconsistencies, unnatural facial movements, temporal artifacts, and audio-visual mismatches. Modern deepfake detection tools rely on neural networks trained to recognize subtle signs that humans miss. Security teams are integrating behavior analytics, biometric verification, and real-time liveness detection to counter AI-driven fraud. Multi-factor authentication, digital watermarking, secure digital identity frameworks, and blockchain-based verification systems are becoming essential defenses against synthetic media exploitation.
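The verification side of these defenses can be illustrated with a small provenance check: the publisher attaches a cryptographic tag to a media file, and anyone holding the key can later confirm the file has not been altered. This is a minimal sketch under stated assumptions, not a watermarking or blockchain scheme. It uses a shared-secret HMAC from the Python standard library as a stand-in for the public-key signatures real provenance systems typically use, and the function names `sign_media` and `verify_media` are hypothetical.

```python
import hashlib
import hmac


def sign_media(media_bytes, key):
    """Return a hex tag binding the key holder to this exact file content."""
    digest = hashlib.sha256(media_bytes).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_media(media_bytes, tag, key):
    """True only if the content is byte-for-byte what was originally signed."""
    expected = sign_media(media_bytes, key)
    return hmac.compare_digest(expected, tag)


# Illustrative values only; a real key would come from a key management system.
key = b"hypothetical-shared-secret"
original = b"...raw bytes of the published video..."
tag = sign_media(original, key)

print(verify_media(original, tag, key))            # True
print(verify_media(original + b"edit", tag, key))  # False: content changed
```

A check like this does not detect whether media is synthetic. It only shows whether a file still matches what a known source published, which is why it complements detection models rather than replacing them.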

Despite these advances, the central challenge remains: deepfakes will keep improving, and they become harder to detect because generators can be trained against the very detection models built to catch them. The result is a cybersecurity arms race between attackers and defenders. Organizations should adopt policies for handling synthetic media, invest in AI-driven threat monitoring, and educate employees about the psychological tricks used in deepfake attacks. Governments and tech companies need to work together on stronger regulations, traceability standards, and accountability frameworks for AI-generated content.

Ultimately, combating deepfake and AI-driven attacks requires a combination of advanced technology, strict governance, and widespread awareness. As AI becomes more powerful, the line between real and artificial will blur even further. The goal is not to eliminate deepfakes, which also have legitimate uses in entertainment, education, and accessibility, but to ensure they do not undermine trust, security, and societal stability. Building robust defenses today is the best way to stay ahead of tomorrow’s AI-powered cyber threats.
